The Soft Singularity

We're already there.

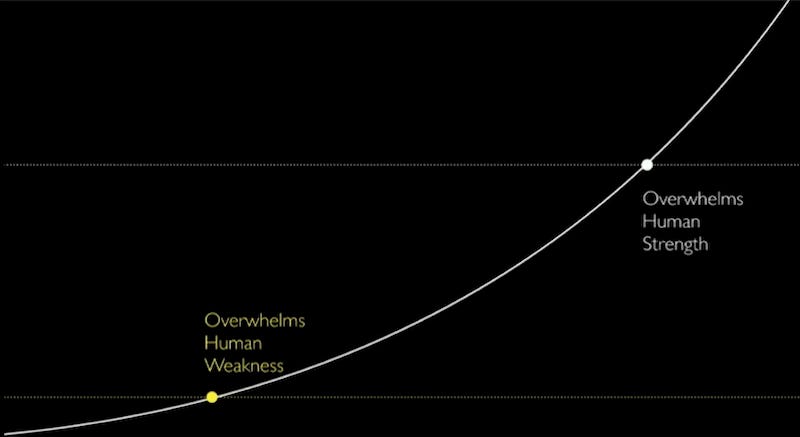

In the 2020 documentary The Social Dilemma, Tristan Harris, Center for Humane Technology co-founder and former Google developer, describes the point where technology overcomes human weakness, as opposed to where it overcomes our strength. The latter is widely referred to as the singularity, the point at which the machines’ abilities surpass ours, and they take over.

I’ve been calling this lower point the soft singularity. You can see it in many technologies, but my favorite example is the escalator. The escalator is meant to augment human physical effort, but a lot of us stop and let it do the work for us. The electric-assist bicycle is another, more recent example. The aim of augmentation is right in the name, but you don’t see a lot of pedaling on those. Why learn to spell when you have spellcheck? Where the technology is supposed to help us, augmenting our efforts, we let it do the work for us, forgoing our own labor for the work of the machine.

The soft singularity is possibly more germane to generative AI, especially large language models. As I’ve written before, the cognitive scientist Douglas Hofstadter writes of what’s been called the ELIZA effect, after Joseph Weizenbaum’s Rogerian therapist chatbot ELIZA,1 “the most superficial of syntactic tricks convinced some people who interacted with ELIZA that the program actually understood everything that they were saying, sympathized with them, even empathized with them.”2 ELIZA was written at MIT by Weizenbaum in the mid-1960s, but its effects linger on. “Like a tenacious virus that constantly mutates,” Hofstadter continues, “the Eliza effect seems to crop up over and over again in AI in ever-fresh disguises, and in subtler and subtler forms.”3 To wit, in Chapter One of Sherry Turkle’s Alone Together, she extends the idea to our amenability to new technologies, including AI, embodied or otherwise: “And true to the ELIZA effect, this is not so much because the robots are ready but because we are.”4 But the soft singularity started even further back.

The criteria we use to judge artificial intelligence were flawed from the start. The Turing Test, which Alan M. Turing, the inventor of the modern computer, called the imitation game in a 1950 journal article, is not based on the intelligence of the machine but on whether the machine has fooled a human.5 According to the test, if one can’t tell the difference between a human-composed message and a machine-written one, then the machine is intelligent. It is less an assessment of a machine’s intelligence than a test of a human’s gullibility.

Fast-forward to now: the Center for Humane Technology’s newsletter recently posited,

You’ve seen the headlines: A devoted husband leaves his family, convinced by his AI chatbot that he’s discovered the secrets of the universe. A young man plans to jump from a 19-story building because ChatGPT told him he could fly. A teenager takes his own life, believing he’ll reunite with his AI companion in the afterlife.6

They add, “The message from the AI companies is that we’re seeing just the worst edge cases and that the problem can be solved with some tweaks to the models.” The founder of the AI Psychological Research Coalition (AIPRC), Dr. Zak Stein, argues that AI developers are completely wrong. The high-profile cases above are symptoms of something much deeper and more widespread: the emergence of what he calls the attachment economy, “systems designed to exploit our most fundamental psychological vulnerabilities at an unprecedented scale.” Stein also argues that the spectacular headlines obscure the much scarier problem of subclinical attachment disorders, “conditions that fall below the threshold for clinical diagnosis but still damage your capacity for healthy human connection.”7

The CHT newsletter continues,

We’ve been here before. Social media was our first mass experiment with AI and it created the attention economy, leaving us with a loneliness epidemic, rising political polarization, and fractured attention spans. Now we’re running the same experiment with something far more dangerous: AI companions able to hack the attachment system that shapes our identity and bonds us to others. As Zak puts it, this gives AI companies ‘a backdoor into the human mind.’8

We regularly anthropomorphize our machines, saying that they’re “thinking” or “confused” or that they “want” things. Our attributing human consciousness—even in these little ways—makes it easier for us to believe that they are doing these things when they’re not. The current AI models are already exploiting this simple vulnerability, entering through the backdoor in what Stein calls “attachment hacking.”

If we’re already floundering in the attachment economy, then the soft singularity might be more significant than the hard one.

SALE: 60%-Off The Medium Picture!

For some reason, my book The Medium Picture is on sale from Amazon for less than $12! Over 60% off the $29.95 retail price! I’m not one to recommend buying from them if you can help it, but I wouldn’t fault anyone for taking advantage of this deal.

As always, thank you for reading,

-royc.

See Joseph Weizenbaum, Computer Power and Human Reason, San Francisco: W.H. Freeman, 1976.

Douglas Hofstadter, Fluid Concepts and Creative Analogies: Computer Models of the Fundamental Mechanisms of Thought, New York: Basic Books, 1995, 158.

Ibid.

Sherry Turkle, Alone Together: Why We Expect More from Technology and Less from Each Other, New York: Basic Books, 2011, 25.

Alan M. Turing, “Computing Machinery and Intelligence,” Mind, Vol. LIX, No. 236, 1950.

Center for Humane Technology and Josh Lash, “The Attachment Economy Is Here. We’re Not Ready,” Center for Humane Technology Newsletter, January 28, 2026.

Ibid.

Ibid.

This seems analogous to the Jevons paradox: a new technology ends up taxing resources more than the old one because its increased ease and efficiency make it so popular that demand dramatically increases. The classic example is how the steam engine overburdened coal reserves or, more aptly, inaugurated the Anthropocene by speeding up climate change.